An overview of Reinforcement Learning

1. Introduction

“When it is not in our power to determine what is true, we ought to act in accordance with what is most probable” – Descartes

Alongside the progress in Computer Vision, Natural Language Processing, and time series prediction, Reinforcement Learning algorithms have also produced surprising results in recent years: the team of DeepMind scientists created AlphaGo, which played against and beat a world champion at Go, and more recently a Dota AI won 5v5 matches against human players.

In supervised learning, each training example is paired with its correct result, but being told the correct result in advance is not how the world works.

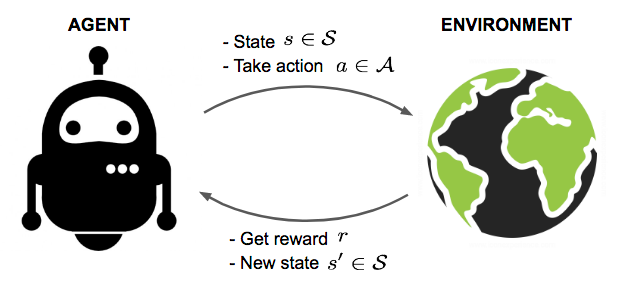

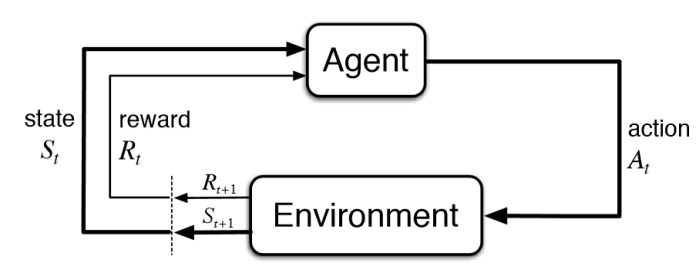

In reinforcement learning (RL) the subject (or Agent, the thing being trained) is not told the correct answer, but it still has to decide how to act. Since there is no labeled training data, the Agent must learn from experience, much as we humans often do. You can think of it as self-study by trial and error, with the goal of optimizing long-term future benefit.

Reinforcement learning is essentially the process of teaching the subject (Agent) to perform a task well by interacting with the environment and receiving rewards. This is the same way we humans learn. For example, when it is too sunny, no one wants to go out (because it is very hot: a negative reward), or if the Vietnam vs. Curacao match is on tonight, we will go to a coffee shop to watch it (lively atmosphere: a positive reward). The reward is the main factor that determines the Agent’s actions.

2. The concepts

- Agent: An agent is the object that takes actions; for example, the avatar that moves around the map in a game.

- Action (A): A is the set of all actions the Agent can take in the game. In other words, an action is the Agent’s response to the environment. For example, in a game, the actions are moving left, right, up, or down, launching moves, …

- Environment: The world in which the Agent moves and from which it observes a state. The environment is everything that influences the Agent and is affected by the Agent. If you are the Agent, then the environment is the laws of physics of the world you live in.

- State: A state is a “picture” of the environment at a given point in time and space.

- Reward (R): A reward is a score the Agent receives for its action; it signals how well the Agent is doing, much like a scholarship does for a student.

- Cumulative reward (accumulated reward): The total reward accumulated from the first state to the last state.

- Discount factor: The discount factor is a coefficient that weights the rewards received. It is designed to make future rewards less valuable than immediate ones. One intuition for this: a lesson we repeat many times matters a little less each time.

- Policy: Policy is the strategy that the Agent uses to determine the next action based on the current state.

- Value (V): The expected long-term benefit; in optimization, the equivalent term is the cost function.

- Trajectory: (some sources call it an Episode) A sequence of states and the actions taken in them, from the start of an interaction to its end.
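To make the cumulative reward and the discount factor concrete, here is a minimal sketch. The function name `discounted_return` and the symbol `gamma` for the discount factor are my own choices for illustration; the reward at step t is weighted by gamma to the power t.

```python
def discounted_return(rewards, gamma):
    """Sum the rewards of one trajectory, weighting step t by gamma**t."""
    total = 0.0
    # Walk backwards so each step applies G_t = r_t + gamma * G_{t+1}.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


rewards = [1.0, 1.0, 1.0]
print(discounted_return(rewards, gamma=1.0))  # no discount: 3.0
print(discounted_return(rewards, gamma=0.5))  # 1 + 0.5 + 0.25 = 1.75
```

With `gamma=1.0` this is just the plain cumulative reward; the smaller `gamma` is, the less the later rewards count.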

3. Algorithms

As the title of this article suggests, I will only introduce some Reinforcement Learning methods without going into the mathematics (that will be the topic of later posts). The one thing we need to know is that J denotes the objective function the Agent tries to optimize.

- REINFORCE algorithm: uses the gradient of J to update the policy

- Actor-Critic algorithm: another gradient-based way to optimize the objective function

- Q-learning: the idea is that the policy used to choose actions while learning can differ from the policy being learned, which aims at the optimal value

- Deep Deterministic Policy Gradient (DDPG)

- Monte Carlo Tree Search (MCTS) – used in AlphaGo

- …….
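As a taste of what one of these methods looks like in code, here is a small sketch of tabular Q-learning. Everything about the setup is an assumption made for illustration: the toy environment (five states in a row, with a reward of 1 for reaching the last one), the function names `step` and `train`, and the values of the learning rate `alpha`, the discount factor `gamma`, and the exploration rate `epsilon`.

```python
import random

N_STATES = 5       # states 0..4 in a row; state 4 is the goal
ACTIONS = (0, 1)   # 0 = move left, 1 = move right


def step(state, action):
    """Hypothetical toy environment: returns (next_state, reward, done)."""
    next_state = max(0, state - 1) if action == 0 else state + 1
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Behaviour policy: epsilon-greedy, ties broken at random.
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[state])
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Learning target: greedy (max) value of the next state,
            # regardless of which action the behaviour policy takes next.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q = train()
# Greedy action per non-goal state; 1 means "move right".
print([max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)])
```

The Agent explores with a partly random policy but learns the value of always acting greedily, and in this toy world the greedy choice ends up being "move right" in every non-goal state.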

4. A “Hello world” program in Reinforcement Learning

Reinforcement Learning is a demanding field, not only because of the complexity of its algorithms but also because it can require extremely large computing resources that only leading companies can provide. Fortunately, we have OpenAI Gym.

About OpenAI Gym

OpenAI is a non-profit research company in the field of AI, founded by Elon Musk and Sam Altman. OpenAI Gym is a toolkit for developing and comparing reinforcement learning algorithms. It supports teaching agents everything from walking to playing games like Pong or Pinball. Gym is an open source interface to reinforcement learning tasks. Gym provides the environment, and it is up to the developer to implement the reinforcement learning algorithm. Developers can write the Agent using an existing numerical computation library, such as TensorFlow or Theano.
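To show the division of labor (the environment is provided, the Agent is yours to write), here is a sketch that does not use Gym itself. `CoinFlipEnv` and `run_episode` are hypothetical names of my own, and the class only mimics the shape of the classic Gym interface as I understand it: `reset()` returns an observation and `step(action)` returns `(observation, reward, done, info)`. Newer releases may differ, so check the documentation of the version you install.

```python
import random


class CoinFlipEnv:
    """Hypothetical toy environment, NOT part of Gym.

    The observation is the current coin (0 or 1); the Agent earns
    a reward of 1 when its action matches the coin.
    """

    def __init__(self, max_steps=10, seed=0):
        self.max_steps = max_steps
        self.rng = random.Random(seed)
        self.steps = 0
        self.coin = 0

    def reset(self):
        self.steps = 0
        self.coin = self.rng.randint(0, 1)
        return self.coin

    def step(self, action):
        reward = 1.0 if action == self.coin else 0.0
        self.steps += 1
        self.coin = self.rng.randint(0, 1)  # flip a new coin
        done = self.steps >= self.max_steps
        return self.coin, reward, done, {}


def run_episode(env, policy):
    """Generic Agent loop: observe, act, collect the reward, repeat."""
    observation = env.reset()
    total_reward, done = 0.0, False
    while not done:
        action = policy(observation)
        observation, reward, done, info = env.step(action)
        total_reward += reward
    return total_reward


# A policy that simply copies the observation is rewarded at every step.
print(run_episode(CoinFlipEnv(), policy=lambda obs: obs))  # 10.0
```

The loop in `run_episode` knows nothing about the game itself, which is the point of a shared interface: the same Agent code can be pointed at a different environment.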

You can visit https://gym.openai.com/

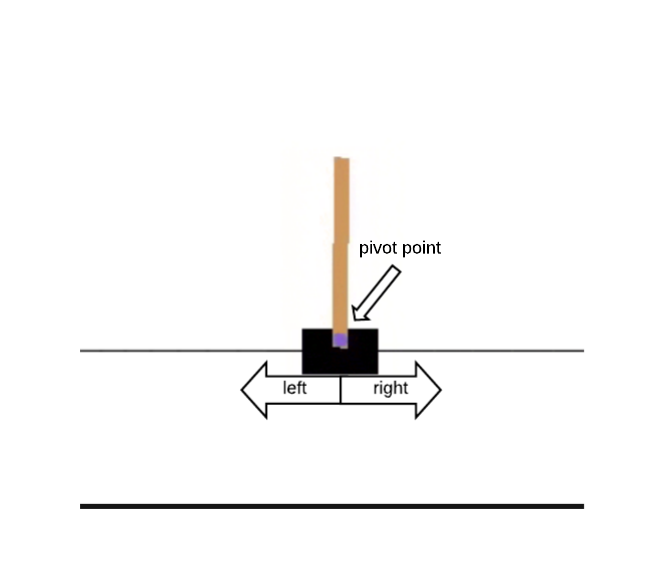

CartPole Game

Since I do not have much time, I will not walk through this part here (maybe in a later post). You can download the code at https://drive.google.com/open?id=1zlcxijCSA2I5ZuqV-rWWJ0JXo2ORMprg and try it yourself (it is provided as a Jupyter notebook).

5. Conclusion

In general, the algorithms themselves have not changed very much, so Reinforcement Learning is largely a battle of data and computing resources.

This is my first blog post, and I want to thank Mr. DuyAnh for his support. If you have a question, please comment below so we can exchange knowledge.

Finally, here is a very interesting video of how an Agent learns to walk:

——————————————————————-

Reference:

https://skymind.ai/wiki/deep-reinforcement-learning

[book] http://incompleteideas.net/book/bookdraft2017nov5.pdf