Recurrent Neural Networks — Part 1

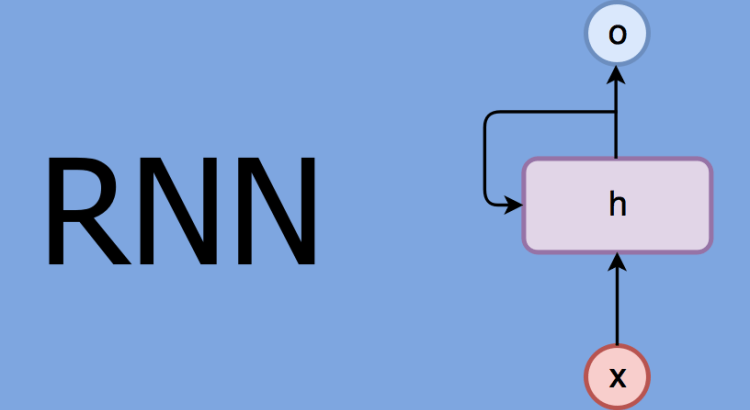

Neural network architectures such as the multilayer perceptron (MLP) are trained using the current inputs only. They do not consider previous inputs when generating the current output. In other words, these systems have no memory elements. RNNs address this basic and important limitation by using memory (i.e., past inputs to the network) when producing the current output.

RNN Introduction

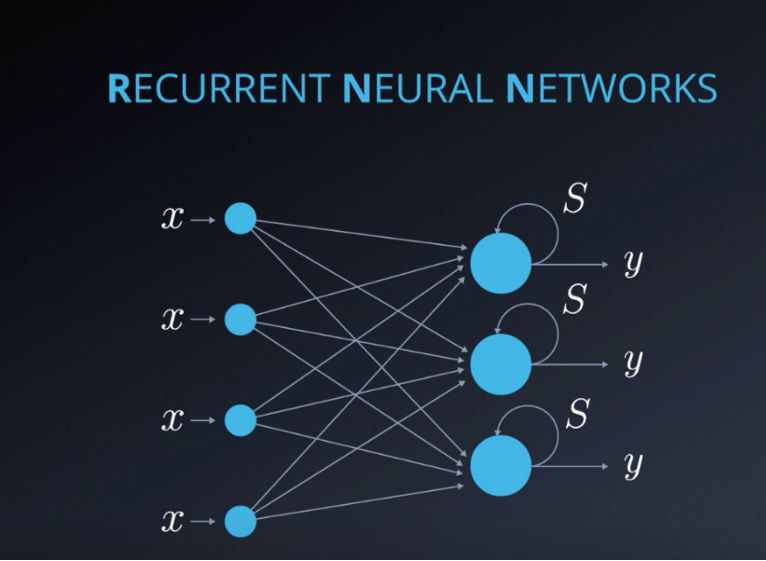

RNNs are artificial neural networks that can capture temporal dependencies, which are dependencies over time.

If you look up the definition of the word recurrent, you will find that it simply means occurring often or repeatedly. So, why are these networks called recurrent neural networks? It’s simply because with RNNs we perform the same task for each element in the input sequence.
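To make "the same task for each element" concrete, here is a minimal sketch of a recurrent loop in plain Python. It assumes a scalar input, a scalar hidden state, and a tanh activation; the names `rnn_step` and `run_rnn` and the weight values are hypothetical, chosen only for illustration.

```python
import math

def rnn_step(x_t, h_prev, w_x, w_h, b):
    """Apply one recurrent step to a scalar input and scalar hidden state."""
    return math.tanh(w_x * x_t + w_h * h_prev + b)

def run_rnn(inputs, w_x=0.5, w_h=0.8, b=0.0):
    """Run the same step, with the same weights, over every sequence element."""
    h = 0.0  # initial hidden state: nothing remembered yet
    states = []
    for x_t in inputs:
        h = rnn_step(x_t, h, w_x, w_h, b)  # the new state depends on the old one
        states.append(h)
    return states

# Only the first input is non-zero, yet the later states are non-zero too,
# because the hidden state carries the first input forward in time.
states = run_rnn([1.0, 0.0, 0.0])
print(states)
```

The hidden state `h` plays the role of the memory: it is the only channel through which earlier inputs can influence later outputs.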

RNN History

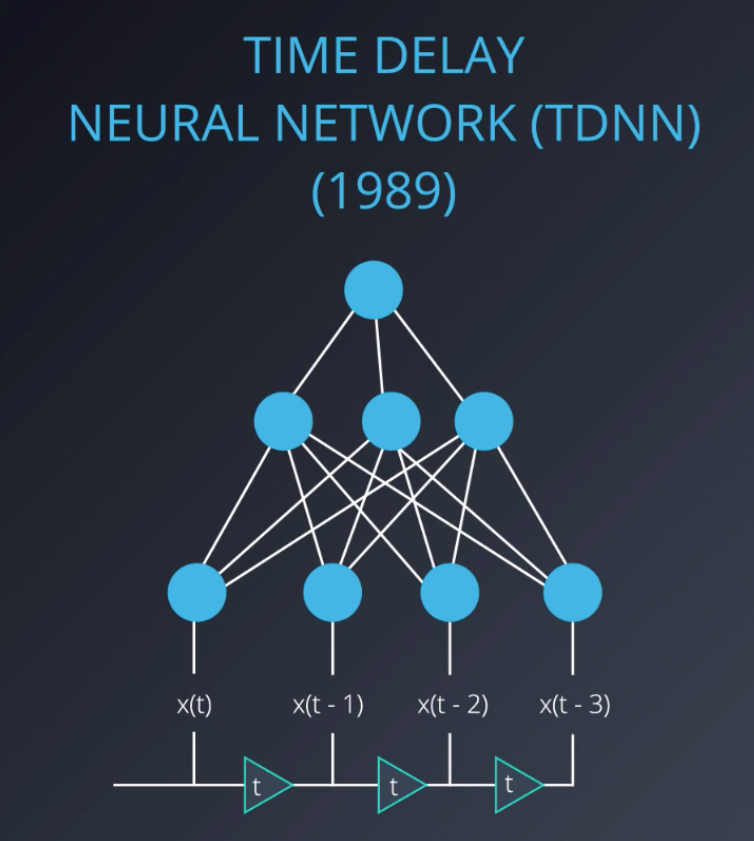

The first attempt to add memory to neural networks was the Time Delay Neural Network, or TDNN for short. In TDNNs, inputs from past time-steps were introduced to the network input, changing the actual external inputs. This had the advantage of clearly allowing the network to look beyond the current time-step, but it also introduced a clear disadvantage: the temporal dependencies were limited to the chosen time window.
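The windowing idea can be sketched as follows. This is an illustration of how past time-steps can be stacked into a fixed-size input, not the original TDNN architecture; the helper name `sliding_windows` and the sample values are assumptions.

```python
def sliding_windows(sequence, window):
    """Build one fixed-size input per time step from the current and past values."""
    return [
        sequence[t - window + 1 : t + 1]
        for t in range(window - 1, len(sequence))
    ]

signal = [10, 20, 30, 40, 50]
windows = sliding_windows(signal, window=3)
print(windows)  # [[10, 20, 30], [20, 30, 40], [30, 40, 50]]
```

With `window=3`, the input at the last time-step contains 30, 40, and 50 only. Anything older than the window, such as the value 10, is invisible to the network at that point, which is the limitation described above.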

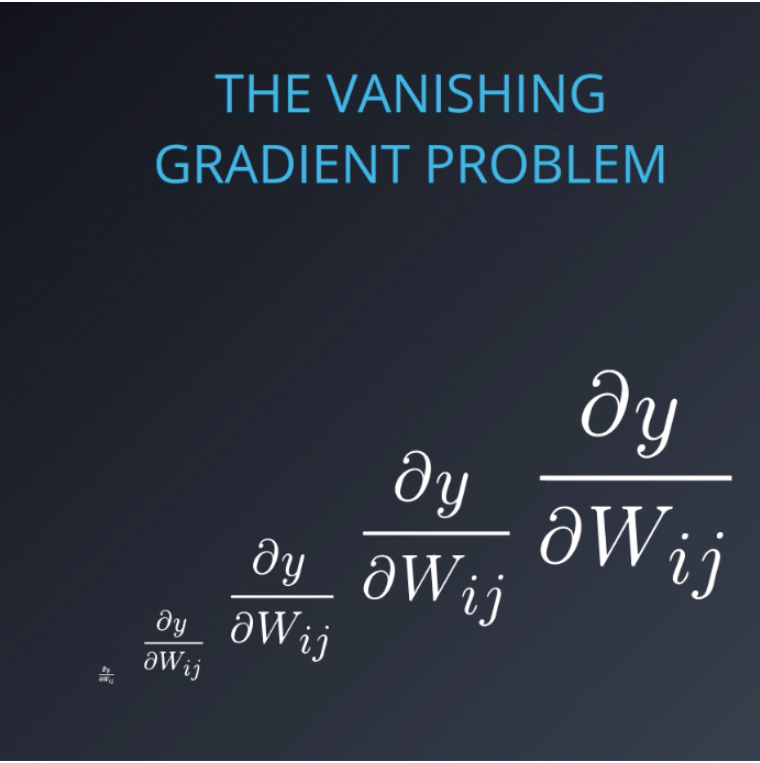

Simple RNNs, also known as Elman networks and Jordan networks, were next to follow. We will talk about all of those later. It was recognized in the early 90s that all of these networks suffer from what we call the vanishing gradient problem, in which contributions of information decay geometrically over time. So, capturing relationships that spanned more than eight or ten steps back was practically impossible.
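A quick numeric sketch shows what geometric decay means in practice. It assumes, purely for illustration, that each step back in time scales a contribution by a constant factor of 0.5; in a real network the factor depends on the weights and activations. The function name `contribution_after` is hypothetical.

```python
def contribution_after(steps, factor):
    """Size of a contribution after being scaled by `factor` at every step."""
    return factor ** steps

for steps in (1, 5, 10, 20):
    print(steps, contribution_after(steps, factor=0.5))
```

Under this assumption, a signal from ten steps back is already scaled by 0.5 ** 10, which is about 0.001, so it has almost no influence on learning.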

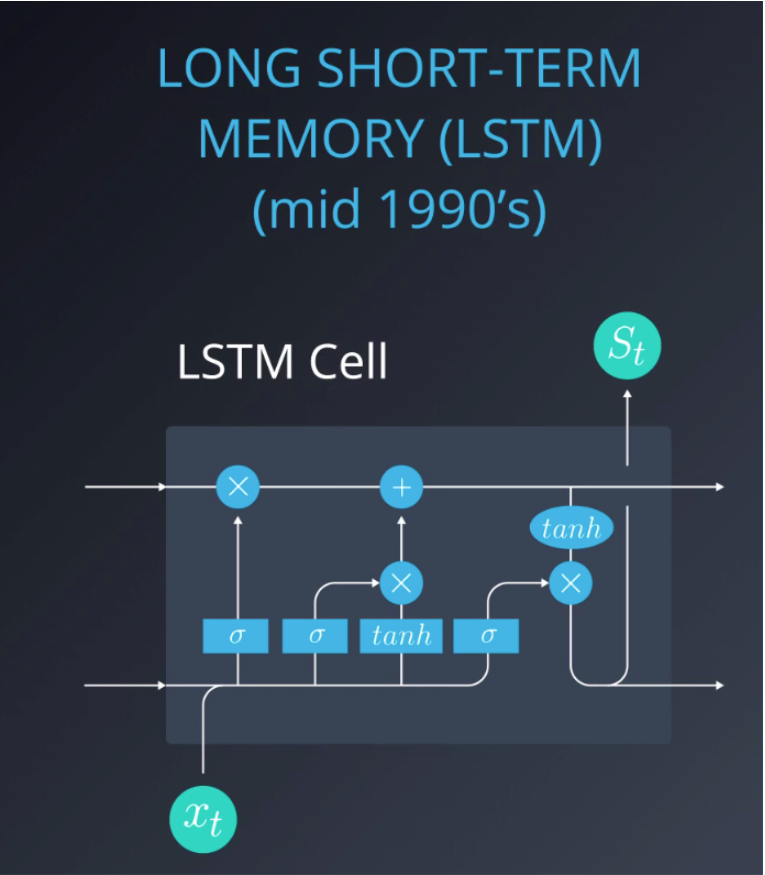

Despite the elegance of these networks, they all had this key flaw. In the mid 90s, Long Short-Term Memory cells, or LSTMs for short, were invented to address this very problem. The key novelty in LSTMs was the idea that some signals, which we call state variables, can be kept fixed by using gates, and then re-introduced, or not, at an appropriate time in the future. In this way, arbitrary time intervals can be represented, and temporal dependencies can be captured.
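The gating idea can be illustrated with a deliberately simplified sketch. It is not a full LSTM cell: in a real LSTM the gate values are computed from the current input and the previous hidden state, whereas here they are set by hand. The function name `gated_update` and all numbers are assumptions.

```python
def gated_update(c_prev, candidate, forget_gate, input_gate):
    """Blend the old state with a new candidate value, as the gates dictate."""
    return forget_gate * c_prev + input_gate * candidate

# Gates set to "keep everything, admit nothing": the state stays fixed.
kept = 0.9
for _ in range(100):
    kept = gated_update(kept, candidate=0.3, forget_gate=1.0, input_gate=0.0)

# A forget gate below 1 lets the state decay geometrically instead.
leaked = 0.9
for _ in range(10):
    leaked = gated_update(leaked, candidate=0.3, forget_gate=0.5, input_gate=0.0)

print(kept, leaked)
```

With the forget gate at 1 and the input gate at 0, the state survives 100 steps unchanged, while a forget gate of 0.5 shrinks it to almost nothing within 10 steps.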

LSTM is one option to overcome the Vanishing Gradient problem in RNNs.

Please use these resources if you would like to read more about the Vanishing Gradient problem or to better understand the concept of a Geometric Series and how its values may decrease exponentially.

If you are still curious, for more information on the important milestones mentioned here, please take a peek at the following links:

- TDNN

- Here is the original Elman Network publication from 1990. This link is provided here as it’s a significant milestone in the world of RNNs. To simplify things a bit, you can take a look at the following additional info.

- In this LSTM link you will find the original paper written by Sepp Hochreiter and Jürgen Schmidhuber.

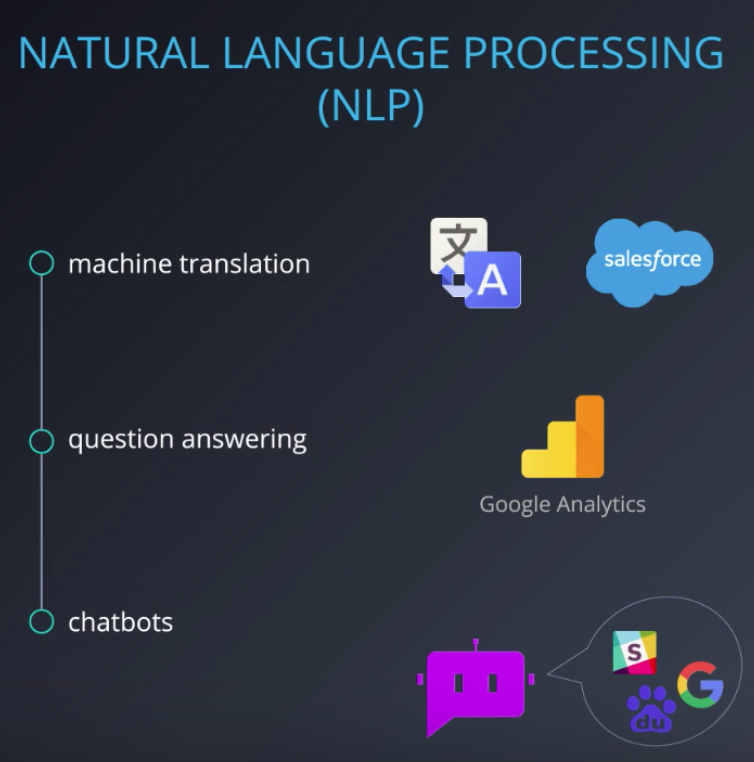

RNN Applications

The world’s leading tech companies are all using RNNs and LSTMs in their applications. Let’s take a look at some of those. One is speech recognition, where a sequence of data samples extracted from an audio signal is continuously mapped to text. Good examples are Google Assistant, Apple’s Siri, Amazon’s Alexa, and Nuance’s Dragon solutions. All of these use RNNs as a part of their speech recognition software.

Another is time series prediction, such as predicting traffic patterns on specific roads to help drivers optimize their driving paths, as Waze does, or predicting what movie a consumer will want to watch next, as Netflix does. It also covers predicting stock price movements based on historical patterns of stock movements and potentially other market conditions that change over time. This is practiced by most quantitative hedge funds.

A third is Natural Language Processing, or NLP for short, which includes machine translation, used by Google and Salesforce for example, and question answering. With Google Analytics, if you’ve got a question about your app, you’ll soon be able to ask Google Analytics directly. Many companies, such as Google, Baidu, and Slack, are using RNNs to drive the Natural Language Processing behind their dialogue engines.

There are so many interesting applications. Let’s look at a few more!

- Are you into gaming and bots? Check out the Dota 2 bot by OpenAI

- How about automatically adding sounds to silent movies?

- Here is a cool tool for automatic handwriting generation

- Amazon Lex, Amazon’s voice-to-text service built on high-quality speech recognition

- Facebook uses RNN and LSTM technologies for building language models

- Netflix also uses RNN models — here is an interesting read
